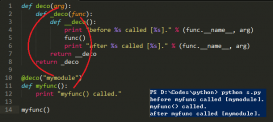

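This article shows, by example, how to use Python to print the tree structure of the pages crawled by a scrapy spider. It is shared here for your reference. The details are as follows: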

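The code below reads a scrapy log, pairs each requested URL with its referer, and prints the result as an indented tree, so the structure of the crawled pages is clear at a glance. It is also very simple to call.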

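```python
#!/usr/bin/env python
import fileinput
import re
from collections import defaultdict


def print_urls(allurls, referer, indent=0):
    """Recursively print every URL requested from `referer`, indented by depth."""
    for url in allurls[referer]:
        print(' ' * indent + url)
        if url in allurls:
            print_urls(allurls, url, indent + 2)


def main():
    # Matches log entries of the form: <GET url> (referer: parent_url)
    log_re = re.compile(r'<GET (.*?)> \(referer: (.*?)\)')
    allurls = defaultdict(list)  # referer -> list of URLs requested from it
    for line in fileinput.input():  # files named on the command line, or stdin
        m = log_re.search(line)
        if m:
            url, ref = m.groups()
            allurls[ref].append(url)
    # Requests without a referer are logged as "referer: None", so 'None' is the root.
    print_urls(allurls, 'None')


if __name__ == '__main__':
    main()
```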
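To try the idea without running a real crawl, the following is a minimal sketch of the same referer-to-URL grouping applied to a few in-memory log lines. The helper name `tree_lines` and the `SAMPLE_LOG` entries are hypothetical and only for illustration; the sample lines are made up, loosely modeled on scrapy's "Crawled" debug messages. Unlike the script above, this sketch also skips URLs already on the current path, on the assumption that a log might contain a referer cycle.

```python
import re
from collections import defaultdict

# Same pattern as the script above: <GET url> (referer: parent_url)
LOG_RE = re.compile(r'<GET (.*?)> \(referer: (.*?)\)')


def tree_lines(log_lines, root='None'):
    """Return the crawl tree as a list of indented strings (hypothetical helper)."""
    children = defaultdict(list)  # referer -> URLs requested from it
    for line in log_lines:
        m = LOG_RE.search(line)
        if m:
            url, ref = m.groups()
            children[ref].append(url)

    out = []

    def walk(referer, indent, seen):
        for url in children.get(referer, []):
            out.append(' ' * indent + url)
            # Do not descend into a URL already on this path (guards against cycles).
            if url not in seen:
                walk(url, indent + 2, seen | {url})

    walk(root, 0, frozenset())
    return out


# Made-up sample lines; real log lines may carry timestamps and spider names.
SAMPLE_LOG = [
    "DEBUG: Crawled (200) <GET http://example.com/> (referer: None)",
    "DEBUG: Crawled (200) <GET http://example.com/a> (referer: http://example.com/)",
    "DEBUG: Crawled (200) <GET http://example.com/b> (referer: http://example.com/)",
    "DEBUG: Crawled (200) <GET http://example.com/a/1> (referer: http://example.com/a)",
    "INFO: Spider closed (finished)",
]

if __name__ == '__main__':
    print('\n'.join(tree_lines(SAMPLE_LOG)))
```

Because the original script reads its input through `fileinput`, it can be given a log file as a command-line argument or have the log piped to it on standard input.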

Hopefully this article is helpful for your Python programming.