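This article shows how to run a randomized hyperparameter search over a Keras model in Python using sklearn's `RandomizedSearchCV`. The full script follows: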

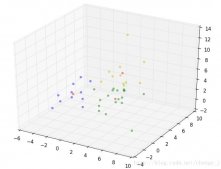

"""房价预测数据集 使用sklearn执行超参数搜索"""import matplotlib as mplimport matplotlib.pyplot as pltimport numpy as npimport sklearnimport pandas as pdimport osimport sysimport tensorflow as tffrom tensorflow_core.python.keras.api._v2 import keras # 不能使用 pythonfrom sklearn.preprocessing import StandardScalerfrom sklearn.datasets import fetch_california_housingfrom sklearn.model_selection import train_test_split, RandomizedSearchCVfrom scipy.stats import reciprocalos.environ['TF_CPP_MIN_LOG_LEVEL'] = '2'assert tf.__version__.startswith('2.')# 0.打印导入模块的版本print(tf.__version__)print(sys.version_info)for module in mpl, np, sklearn, pd, tf, keras: print("%s version:%s" % (module.__name__, module.__version__))# 显示学习曲线def plot_learning_curves(his): pd.DataFrame(his.history).plot(figsize=(8, 5)) plt.grid(True) plt.gca().set_ylim(0, 1) plt.show()# 1.加载数据集 california 房价housing = fetch_california_housing()print(housing.DESCR)print(housing.data.shape)print(housing.target.shape)# 2.拆分数据集 训练集 验证集 测试集x_train_all, x_test, y_train_all, y_test = train_test_split( housing.data, housing.target, random_state=7)x_train, x_valid, y_train, y_valid = train_test_split( x_train_all, y_train_all, random_state=11)print(x_train.shape, y_train.shape)print(x_valid.shape, y_valid.shape)print(x_test.shape, y_test.shape)# 3.数据集归一化scaler = StandardScaler()x_train_scaled = scaler.fit_transform(x_train)x_valid_scaled = scaler.fit_transform(x_valid)x_test_scaled = scaler.fit_transform(x_test)# 创建keras模型def build_model(hidden_layers=1, # 中间层的参数 layer_size=30, learning_rate=3e-3): # 创建网络层 model = keras.models.Sequential() model.add(keras.layers.Dense(layer_size, activation="relu", input_shape=x_train.shape[1:])) # 隐藏层设置 for _ in range(hidden_layers - 1): model.add(keras.layers.Dense(layer_size, activation="relu")) model.add(keras.layers.Dense(1)) # 优化器学习率 optimizer = keras.optimizers.SGD(lr=learning_rate) model.compile(loss="mse", optimizer=optimizer) return modeldef main(): # RandomizedSearchCV # 1.转化为sklearn的model 
sk_learn_model = keras.wrappers.scikit_learn.KerasRegressor(build_model) callbacks = [keras.callbacks.EarlyStopping(patience=5, min_delta=1e-2)] history = sk_learn_model.fit(x_train_scaled, y_train, epochs=100, validation_data=(x_valid_scaled, y_valid), callbacks=callbacks) # 2.定义超参数集合 # f(x) = 1/(x*log(b/a)) a <= x <= b param_distribution = { "hidden_layers": [1, 2, 3, 4], "layer_size": np.arange(1, 100), "learning_rate": reciprocal(1e-4, 1e-2), } # 3.执行超搜索参数 # cross_validation:训练集分成n份, n-1训练, 最后一份验证. random_search_cv = RandomizedSearchCV(sk_learn_model, param_distribution, n_iter=10, cv=3, n_jobs=1) random_search_cv.fit(x_train_scaled, y_train, epochs=100, validation_data=(x_valid_scaled, y_valid), callbacks=callbacks) # 4.显示超参数 print(random_search_cv.best_params_) print(random_search_cv.best_score_) print(random_search_cv.best_estimator_) model = random_search_cv.best_estimator_.model print(model.evaluate(x_test_scaled, y_test)) # 5.打印模型训练过程 plot_learning_curves(history)if __name__ == '__main__': main() |
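To get a feel for what the search space in step 2 produces, the sketch below draws ten candidate settings from the same `param_distribution` without training anything. It uses sklearn's `ParameterSampler`, which is not part of the original script and is brought in here only for illustration; the `random_state=42` seed and the probe point `x = 1e-3` are likewise assumptions of this sketch.

```python
import numpy as np
from scipy.stats import reciprocal
from sklearn.model_selection import ParameterSampler

# Same bounds as the learning-rate distribution in the script.
low, high = 1e-4, 1e-2
learning_rate_dist = reciprocal(low, high)

# Check the density against f(x) = 1 / (x * log(b / a)) at one point.
x = 1e-3  # arbitrary probe point inside [low, high]
expected_pdf = 1.0 / (x * np.log(high / low))
pdf_matches = bool(np.isclose(learning_rate_dist.pdf(x), expected_pdf))

param_distribution = {
    "hidden_layers": [1, 2, 3, 4],
    "layer_size": np.arange(1, 100),
    "learning_rate": learning_rate_dist,
}

# Draw 10 random candidates; lists/arrays are sampled uniformly,
# distribution objects are sampled through their rvs() method.
# random_state=42 is an illustrative seed, not taken from the script.
candidates = list(
    ParameterSampler(param_distribution, n_iter=10, random_state=42))

for params in candidates:
    print(params)
```

Because the reciprocal distribution is uniform on a log scale, roughly half of the sampled learning rates should fall below 1e-3 and half above, which tends to suit parameters that span several orders of magnitude.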

Original article: https://blog.csdn.net/weixin_45875105/article/details/104008975